In January 2021, as Congress prepared to impeach outgoing President Donald Trump for his role in inciting a mob that attacked the U.S. Capitol on Jan. 6, reports emerged that YouTube had become the latest online platform to take action against Trump.

On Jan. 13, for example, CBS News published a report with the headline "YouTube Temporarily Bans New Videos From Trump Account." The article reported that:

President Trump is facing consequences from yet another social media platform following last week's attack on the U.S. Capitol. YouTube said late Tuesday it had removed new content from Mr. Trump's channel and banned him from uploading any videos or livestreams for at least a week. Comments were also indefinitely banned on the channel.

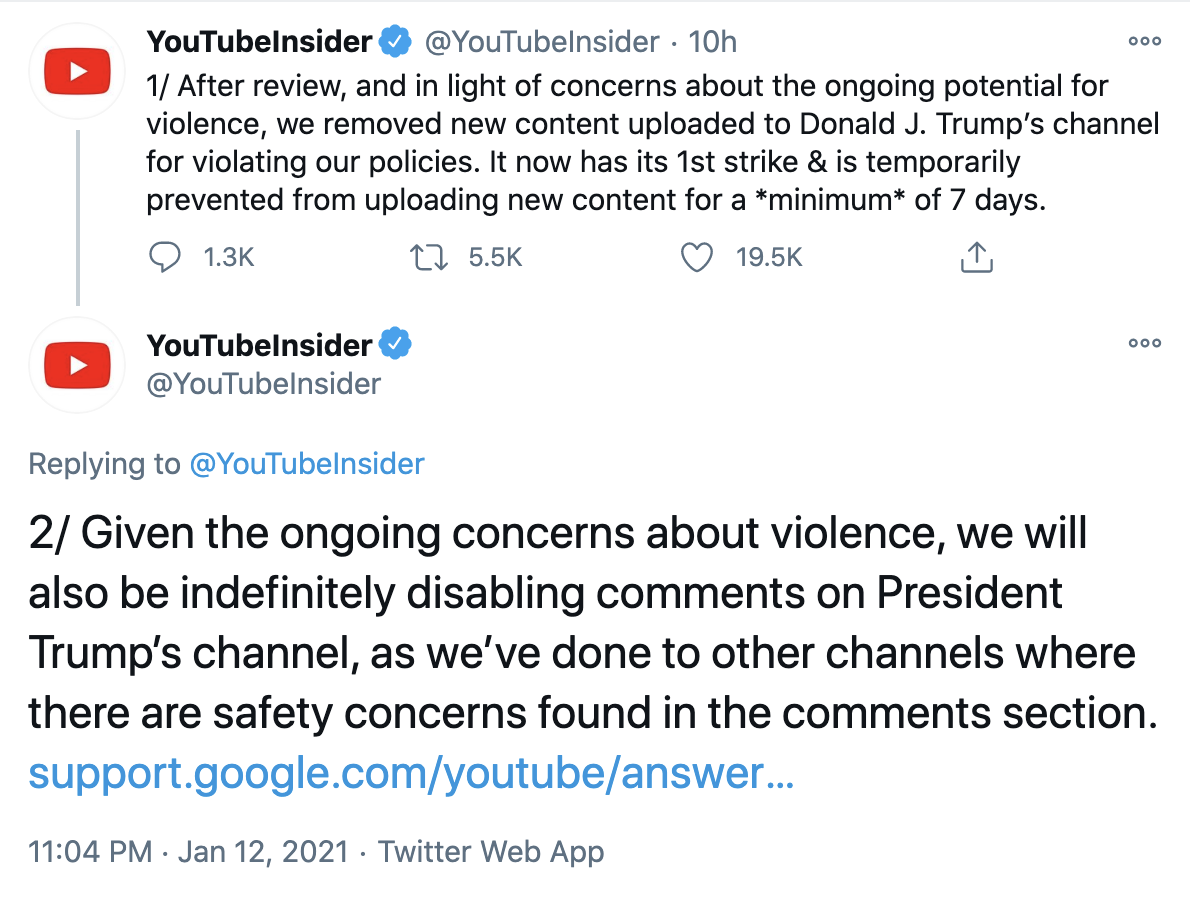

That report and others like it were accurate. On Jan. 12, YouTube announced the suspension in a pair of tweets that read:

After review, and in light of concerns about the ongoing potential for violence, we removed new content uploaded to Donald J. Trump’s channel for violating our policies. It now has its 1st strike & is temporarily prevented from uploading new content for a *minimum* of 7 days.

Given the ongoing concerns about violence, we will also be indefinitely disabling comments on President Trump’s channel, as we’ve done to other channels where there are safety concerns found in the comments section.

YouTube told Snopes the video in question had been uploaded on Jan. 12, and had violated the company's policy on inciting violence.

The suspension was the latest in a litany of actions taken by major online platforms and social networks, beginning on the day of the Capitol riots on Jan. 6, when Twitter locked Trump's account for 12 hours and warned he would be banned for good if he continued to violate the platform's policies.

Two days later, Twitter made good on that promise, permanently banning Trump for tweets that the company said violated its prohibition on glorifying violence.

On Jan. 7, YouTube warned that from then on it would issue a "strike" to any channel that published false claims relating to President-elect Joe Biden's certified election victory.

In keeping with YouTube's existing community guidelines, channels that receive a "first strike" are suspended from publishing new content for seven days. If the channel reoffends within three months, it will receive a second strike, and be banned from publishing new videos for two weeks. If a channel breaches YouTube policies three times within three months, it is typically permanently banned.

Also on Jan. 7, Facebook CEO Mark Zuckerberg announced the company had suspended Trump indefinitely, and for at least the following two weeks, finding that he had used the platform to "condone rather than condemn the actions of his supporters at the Capitol building," and that there was an intolerable risk Trump would use Facebook to "incite violent insurrection against a democratically elected government."

In the aftermath of the attack on the Capitol, the video-streaming platform Twitch also indefinitely froze Trump's account, and the video-sharing app TikTok removed and demoted excerpts from Trump's speech at the "Save America" rally on Jan. 6.