In early 2017, several news outlets reported that owners of Amazon's Alexa digital assistant devices were "left out of pocket" after a 6-year-old girl named Brooke Neitzel inadvertently triggered one into ordering an expensive dollhouse, and that a news report on the incident then triggered a plague of automated, unwanted dollhouse orders:

[In January 2017] it was reported that an Amazon Echo in Dallas, Texas, had ordered a $170 dollhouse after six-year-old Brooke Neitzel asked: 'Can you play dollhouse with me and get me a dollhouse?'

Although the little girl apparently meant it as a rhetorical question the device saw it as a command and ordered a KidKraft Sparkle mansion dollhouse, as well as four pounds of sugar cookies.

But to make matters worse many Amazon Echoes apparently picked up on TV anchor Jim Patton's words in the report: 'I love the little girl saying "Alexa ordered me a dollhouse".'

Viewers at home were then left stunned when their own Amazon Echoes picked up the voice requests in the report and ordered dolls houses too.

As voice-command purchasing is enabled as a default on the Alexa devices, viewers found it had mistaken the show for their command and bought the toy.

According to that report, a Texas kindergartner's request for Alexa to "play dollhouse" with her and "get [her] a dollhouse" resulted in her family's being charged for an order for a top-dollar toy. And when the family's tale was reported by a San Diego news station, "stunned" viewers also became the recipients of unwanted dollhouses simply because an anchor repeated the same phrase, which their own Amazon Echo/Alexa devices picked up on and transformed into orders.

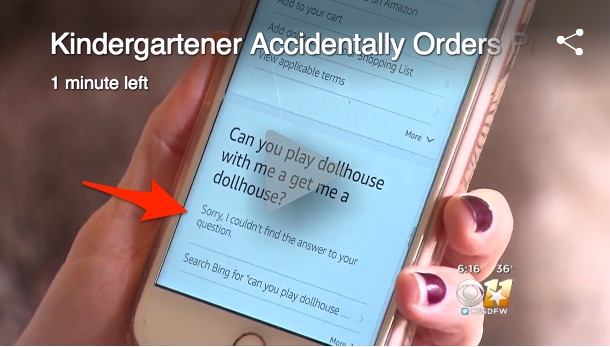

In one interview, Neitzel's mother Megan provided the Alexa log showing that her daughter had asked the device "Can you play dollhouse with me and get me a dollhouse?" But the embedded video of the log suggested that instead of confirming a dollhouse order, Alexa had responded, "Sorry, I couldn't find the answer to your question."

We attempted to replicate the circumstances of the purported inadvertent order and were unable to do so. When asked "can you play dollhouse with me and get me a dollhouse?" our Alexa responded with "now shuffling songs by Bauhaus." Asking "Alexa, can you get me a dollhouse?" returned "hmm ... I'm not sure what you meant by that question." "Alexa, can you play dollhouse with me?" returned " ... here's a sample of 'Dollhouse Sounds' by Dollhouse Incorporated." "Alexa, can you get me a dollhouse?" was answered by "I wasn't able to understand the question I heard."

No permutation of "[c]an you play dollhouse with me and get me a dollhouse?" we tried resulted in a prompt to confirm a dollhouse order in any of our experiments conducted with two separate Alexa-enabled devices. The only potential purchase-triggering word appeared to be "order," after which it was necessary to confirm or decline the transaction (which we did at least three times and did not accidentally order any dollhouses).

An Amazon spokesperson told us in response to our inquiry that ordering items through Alexa is a multi-step process that requires confirmation:

You must ask Alexa to order a product and then confirm the purchase with a “yes” response to purchase via voice. If you asked Alexa to order something on accident, simply say “no” when asked to confirm. You can also manage your shopping settings in the Alexa app, such as turning off voice purchasing or requiring a confirmation code before every order. Additionally, orders you place for physical products are eligible for free returns.

This conforms to our own experience: we couldn't induce Alexa into ordering anything without its first showing us the item, displaying a price, and asking us for verbal confirmation. We also couldn't get Alexa to order two items at once, as supposedly happened with the dollhouse and cookies — Alexa always processed multiple items separately no matter how we tried to join them, displaying a price and requiring a verbal confirmation for each item.

We also could find no confirmation (outside of anecdotal repetition) that a spate of unwanted dollhouse orders followed in the wake of San Diego newscaster Jim Patton's report on the story. The television audio of that newscast might have woken up some viewers' Alexas, but those viewers would still have had to confirm any potential dollhouse purchases by responding "yes" to Alexa's request for purchase confirmation. We have yet to locate any of the "viewers at home left stunned" when their Alexa devices foisted undesired $165 toys upon them.