Two researchers (presumably graduate students) from Stanford University and Tilburg University co-authored a paper asserting they uncovered information suggesting widespread primary election fraud favoring Hillary Clinton had occurred across multiple states.

The paper was not a "Stanford Study," and its authors acknowledged their claims and research methodology had not been subject to any form of peer review or academic scrutiny.

On 8 June 2016, the Facebook page "The Bern Report" shared a document authored by researchers Axel Geijsel of Tilburg University in the Netherlands and Rodolfo Cortes Barragan of Stanford University suggesting that "the outcomes of the 2016 Democratic Party nomination contest [are not] completely legitimate":

Are the results we are witnessing in the 2016 primary elections trustworthy? While Donald Trump enjoyed a clear and early edge over his Republican rivals, the Democratic contest between former Secretary of State Hillary Clinton and Senator Bernard Sanders has been far more competitive. At present, Secretary Clinton enjoys an apparent advantage over Sanders. Is this claimed advantage legitimate? We contend that it is not, and suggest an explanation for the advantage: States that are at risk for election fraud in 2016 systematically and overwhelmingly favor Secretary Clinton. We provide converging evidence for this claim.

First, we show that it is possible to detect irregularities in the 2016 Democratic Primaries by comparing the states that have hard paper evidence of all the placed votes to states that do not have this hard paper evidence. Second, we compare the final results in 2016 to the discrepant exit polls. Furthermore, we show that no such irregularities occurred in the 2008 competitive election cycle involving Secretary Clinton against President Obama. As such, we find that in states wherein voting fraud has the highest potential to occur, systematic efforts may have taken place to provide Secretary Clinton with an exaggerated margin of support.

In an appendix, Geijsel and Barragan stated that their research was still in progress and had not yet been subject to peer review, but that, given the timeliness of the topic and the ongoing primary ballot count in California (among other factors), they believed it better to release their findings early:

Statement on peer-review: We note that this article has not been officially peer-reviewed in a scientific journal yet. Doing so will take us several months. As such, given the timeliness of the topic, we decided to publish on the Bern Report after we received preliminary positive feedback from two professors (both experts in the quantitative social sciences). We plan on seeking peer-reviewed publication at a later time. As of now, we know there may be errors in some numbers (one has been identified and sent to us: it was a mislabeling). We encourage anyone to let us know if they find any other error. Our aim here truly is to understand the patterns of results, and to inspire others to engage with the electoral system.

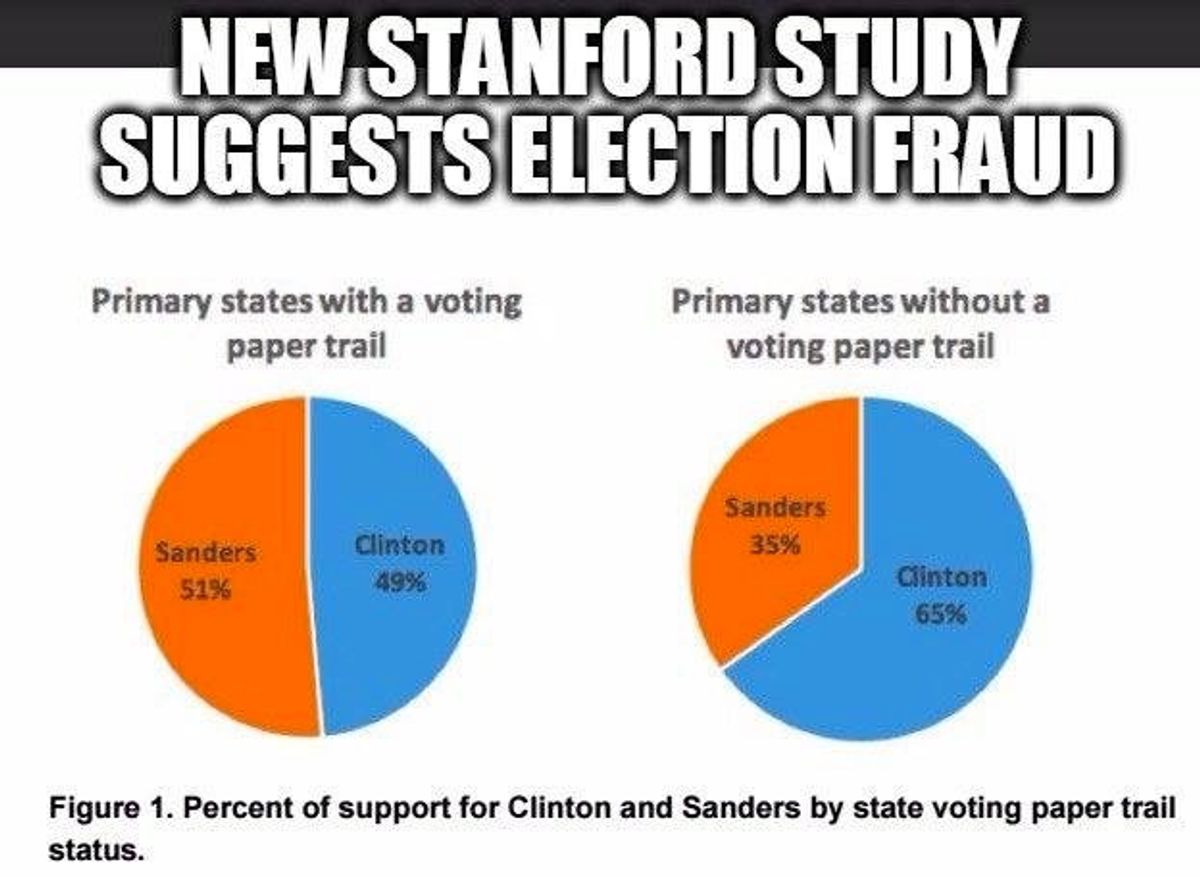

The post-introduction portion of the paper began with a comparison of outcomes in "primary states with paper trails and without paper trails," holding that potentially inaccurate results led the researchers to "restrict [our] analysis to a proxy: the percentage of delegates won by Secretary Clinton and Senator Sanders." Using the Ballotpedia web site, they identified 18 states that use a form of paper verification for votes and 13 states without such a "paper trail," then concluded that the states without "paper trails" demonstrated a higher rate of support for Hillary Clinton:

Analysis: The [data] show a statistically significant difference between the groups. States without paper trails yielded higher support for Secretary Clinton than states with paper trails. As such, the potential for election fraud in voting procedures is strongly related to enhanced electoral outcomes for Secretary Clinton. In the Appendix, we show that this relationship holds even above and beyond alternative explanations, including the prevailing political ideology and the changes in support over time.

The information included in the Appendix didn't explicate exactly what those alternative explanations might be:

Are there other variables that could account for our main effect (states without paper trails going overwhelmingly for Clinton)? We conducted a regression model and included the % of Non-Hispanic Whites in a state as of the last Census, the state’s electoral history from 1992 to 2012 of favoring Democratic or Republican nominees for President (i.e., the “blueness” of a state), and our variable of interest: paper trail vs. no paper trail. As expected, race/ethnicity and political ideology played a role: The Whiter and more liberal a state, the less it favored Clinton. However, the effect for paper trail remains significant. States with paper trails show significantly less support for Clinton. As such, even beyond the potential for other likely factors to play a role, the potential for fraud is associated with gains for Clinton.

Dependent variable: Percent support for Clinton in the primaries

In the paper's second portion, the researchers examined discrepancies between exit polls and final results by state, a subject of debate (hashtagged #ExitPollGate on social media) that predated the publication of their paper and was addressed in a Nation article disputing the claim that exit polls revealed fraud. The Nation's analysis held that exit polling designed to detect fraud differs significantly from the type of exit polling typically carried out in the United States:

While exit polls are used to detect potential fraud in some countries, ours aren’t designed, and aren’t accurate enough, to accomplish that purpose. [A polling company VP], who has conducted exit polls in fragile democracies like Ukraine and Venezuela, explained that there are three crucial differences between their exit polls and our own. Polls designed to detect fraud rely on interviews with many more people at many more polling places, and they use very short questionnaires, often with just one or two questions, whereas ours usually have twenty or more. Shorter questionnaires lead to higher response rates. Higher response rates paired with larger samples result in much smaller margins of error. They’re far more precise. But it costs a lot more to conduct that kind of survey, and the media companies that sponsor our exit polls are only interested in providing fodder for pundits and TV talking heads. All they want to know is which groups came out to vote and why, so that’s what they pay for.

As well, standard exit polling conducted in the U.S. can be very inaccurate and systematically biased for a number of reasons, including:

- Differential nonresponse, in which the supporters of one candidate are likelier to participate than those of another candidate. Exit polls have limited means to correct for nonresponse, since they can weight only by visually identifiable characteristics. Hispanic origin, income and education, for instance, are left out.

- Cluster effects, which happen when the precincts selected aren’t representative of the overall population. This is a very big danger in state exit polls, which include only a small number of precincts. As a result, exit polls have a larger margin of error than an ordinary poll of similar size. These precincts are selected to have the right balance of Democratic and Republican precincts, which isn’t so helpful in a primary.

- Absentee voters aren’t included at all in states where they represent less than 20 percent or so of the vote.

As the New York Times put it, "[N]o one who studies the exit polls believes that they can be used as an indicator of fraud in the way the conspiracy theorists do."
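The cluster-effect point above can be illustrated with a back-of-the-envelope calculation. The sketch below is not drawn from any of the cited sources: the sample size of 1,000 and the design effect of 2.0 are hypothetical values, chosen only to show how clustering can widen a margin of error relative to a simple random sample of the same size.

```python
import math

def margin_of_error(n, p=0.5, z=1.96, design_effect=1.0):
    """Approximate 95% margin of error for a proportion.

    design_effect > 1 inflates the sampling variance to account for
    respondents being clustered in a small number of precincts.
    """
    return z * math.sqrt(design_effect * p * (1 - p) / n)

n = 1000  # hypothetical number of respondents

srs = margin_of_error(n)                            # simple random sample
clustered = margin_of_error(n, design_effect=2.0)   # assumed design effect

print(f"simple random sample: +/-{srs:.1%}")
print(f"clustered sample:     +/-{clustered:.1%}")
```

Under these assumed inputs the clustered margin is about 1.4 times the simple-random-sample margin, since the margin scales with the square root of the design effect. Actual design effects for state exit polls are not given in the material quoted here.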

Nonetheless, Geijsel and Barragan contended in their paper that:

Anomalies exist between exit polls and final results

Data procurement: We obtained exit poll data from a database kept by an expert on the American elections.

Analysis: On the overall, are the exit polls different from the final results? Yes they are. The data show lower support for Secretary Clinton in exit polls than the final results would suggest. While an effect size of 0.71 is quite substantial, and suggests a considerable difference between exit polls and outcomes, we expected that this difference would be even more exaggerated in states without paper voting trails. Indeed, the effect size in states without paper voting trails is considerably larger: 1.50, and yields more exaggerated support for the Secretary in the hours following the exit polls.

The expert whose numbers were utilized for the paper wasn't expressly cited by name, but his moniker appeared on the linked spreadsheet: Richard Charnin. Charnin indeed lists some impressive statistical credentials on his personal blog, but he also appears to expend much of his focus on conspiracy theories related to the JFK assassination (which raises the question of whether his math skills outstrip his ability to apply skeptical reasoning to data).

Geijsel addressed questions about exit poll numbers in a subsequent e-mail to a blogger who was highly skeptical of his research:

In short, exit polling works using a margin of error, you will always expect it to be somewhat off the final result. This is often mentioned as being the margin of error, often put at 95%, it indicates that there's a 95% chance that the final result will lie within this margin. In exit polling this is often calculated as lying around 3%. The bigger the difference, the smaller the chance that the result is legitimate. This is because although those exit polls are not 100% accurate, they're accurate enough to use them as a reference point. In contrast to the idea that probably 1 out of 20 results will differ. Our results showed that (relatively) a huge amount of states differed. This would lead to two possibilities, a) the Sanders supporters are FAR more willing to take the exit polls, or b) there is election fraud at play.

Considering the context of these particular elections, we believe it's the latter. Though that's our personal opinion, and others may differ in that, we believe we can successfully argue for that in a private setting considering the weight of our own study, the beliefs of other statisticians who have both looked at our own study (and who have conducted corroborating studies), and the fact that the internet is littered with hard evidence of both voter suppression and election fraud having taken place.
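The "1 out of 20" reasoning in the e-mail can be sketched numerically. This is an illustration, not the authors' calculation: the count of 20 states is hypothetical, and the arithmetic assumes that each state's exit poll is unbiased, that its stated 95% margin is accurate, and that errors are independent across states. Those are the very assumptions that the critiques of U.S. exit polling quoted earlier dispute.

```python
from math import comb

def prob_at_least(k, n, p=0.05):
    """Probability that at least k of n independent polls miss their margin,
    when each poll misses with probability p (0.05 for a 95% margin)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

n_states = 20  # hypothetical number of states with exit polls

# Expected number of states falling outside the margin by chance alone.
expected_misses = n_states * 0.05

print(f"expected misses: {expected_misses:.1f}")
for k in (1, 3, 5):
    print(f"P(at least {k} misses) = {prob_at_least(k, n_states):.4f}")
```

If the true margins are wider than stated, or if nonresponse pushes every poll in the same direction, the per-state miss probability is larger than 0.05 and a large number of misses no longer points specifically to fraud.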

That blogger passed the analysis on to his father ("a retired Professor Emeritus in Mathematics and Applied Statistics at the University of Northern Colorado"), Donald T. Searls, Ph.D., for comment:

I simply asked him to review it in full and send me his comments as to its methodology and his view as to its validity. For the record, he has been a Republican for as long as I can recall and has no interest in voting for the Democratic nominee, whoever that might be. I received his response via e-mail today. Here is what he wrote:

I like the analysis very much up to the point of applying probability theory. I think the data speak for itself (themselves). It is always problematic to apply probability theory to empirical data. Theoretically unknown confounding factors could be present. The raw data is in my mind very powerful and clear on its own.

My personal opinion is that the whole process has been rigged against Bernie at every level and that is devastating even though I don't agree with him.

I called him after receiving his response to [ask him to] clarify his remarks on the application of probability theory to the data. His comment to me was that he did not believe it was necessary for the authors to take that step. If he had done the study himself, he would not have bothered with doing so. As he said, the data speaks for itself.

Although Geijsel cited a number of sources to substantiate the claim that fraud was well-documented in the 2016 primary season, most of those citations involved persons with an interest in the overall dispute (such as groups party to lawsuits). That factor doesn't necessarily cast doubt on the researchers' findings, but it highlights that not much independent and neutral verification of their conclusions has occurred yet.