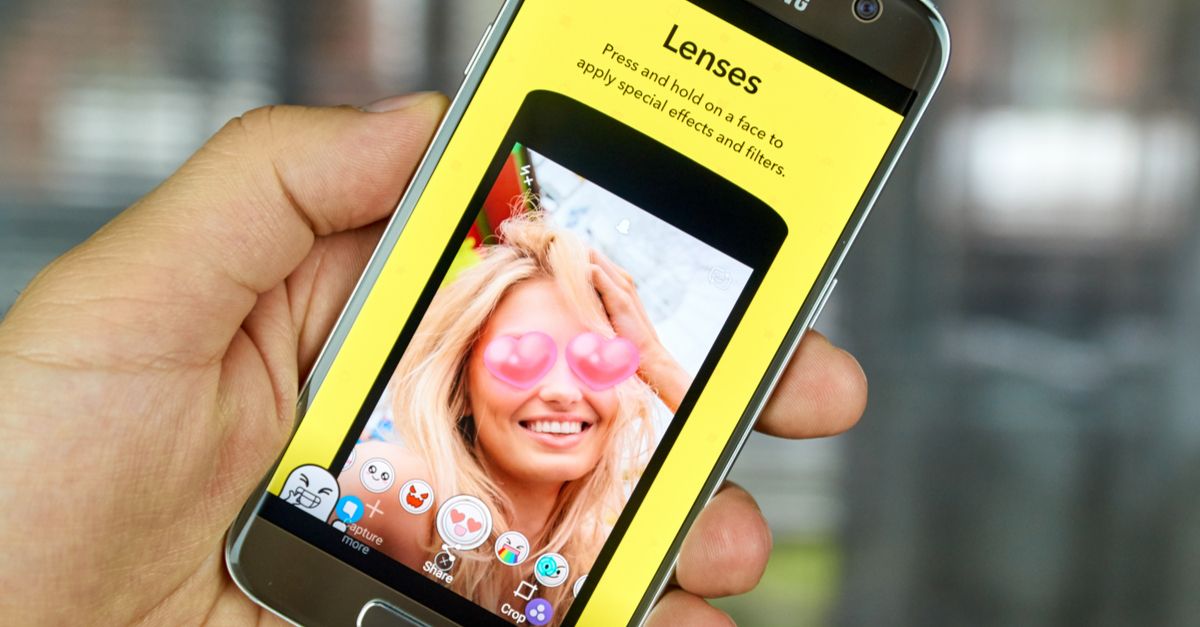

One of the more whimsical messaging options offered by Snapchat, a social media app for mobile devices introduced in 2011, is the ability to personalize selfies in real time and share them instantly with other users. The feature has both contributed to the app's immense popularity (Snapchat boasts an estimated 166 million daily users) and raised privacy concerns among some of its customers.

Snapchat's rotating toolbox of image filters, called Lenses, enables users to manipulate photos and videos to humorous effect, as in examples shared publicly on Instagram by celebrity Snapchatter Chrissy Teigen.

Cute and innocent though it may appear, the feature has become the target of conspiracy theorists claiming that Snapchat's corporate owner, Snap Inc., uses it to collect facial recognition data which it allegedly stores and shares with law enforcement agencies such as the FBI and CIA.

We've found examples of such rumors dating back to fall 2015 (soon after the Lenses feature was officially rolled out):

you guys are all swooning over the snapchat filters... And The FBI is getting the most extensive facial recognition library ever

— TEENWOLF (@TEENWOLFREMIX) October 3, 2015

It wasn't until April of the following year that the rumors reached takeoff speed, however, thanks largely to a tweet sent by hip hop artist, songwriter, and unabashed flat-earth theorist B.o.B to his roughly two million followers:

when you realize all the snap chat filters are really building a facial recognition database ☕️?

— B.o.B (@bobatl) April 16, 2016

In May 2016, with civil cases already pending against Facebook and Google alleging unauthorized use of facial recognition technology, two Snapchat users in Illinois filed a class action lawsuit alleging that the app violated their rights under the state's Biometric Information Privacy Act (BIPA) by failing to obtain adequate permission before gathering and storing their "biometric identifiers and biometric information."

The company flatly denied the allegation:

Contrary to the claims of this frivolous lawsuit, we are very careful not to collect, store, or obtain any biometric information or identifiers about our community.

The class action suit was eventually dismissed in favor of arbitration in September 2016, but as of this writing the case remains unresolved. Crucial to Snapchat's defense is its position, as stated in the Privacy Center of the company's web site, that the app relies on object recognition, not facial recognition, to make Lenses work:

Have you ever wondered how Lenses make your eyes well up with tears or rainbows come out your mouth? Some of the magic behind Lenses is object recognition. Object recognition is an algorithm designed to understand the general nature of things that appear in an image. It lets us know that a nose is a nose or an eye is an eye. But object recognition isn’t the same as facial recognition. While Lenses can recognize faces in general, they can't recognize a specific face.

If it's true that Lenses can't recognize (i.e., identify) specific faces, then the claim that the app produces anything qualifying as a "biometric identifier" under Illinois law is seriously in doubt. (The district judge in Illinois overseeing the Google facial recognition case previously defined "biometric identifier" as "a set of biology-based measurements ... used to identify a person.")

As to the wider claim that Snapchat is building a "facial recognition database," the distinction between object and facial recognition, at minimum, places a burden of proof on those trumpeting the claim to show that the app is capable of identifying specific faces in the first place. If an explanation of how the software works provided by the web site Vox is accurate, Snapchat doesn't need to identify specific faces to accomplish the task. It has to recognize a face as a face and identify its parts (the nose, eyes, ears, chin, etc.), but it doesn't have to recognize whom the face belongs to.

Moreover, Snapchat's Privacy Policy states that the company does not permanently store user-created content (meaning photos and videos), let alone preserve such items in a database:

Snapchat lets you capture what it’s like to live in the moment. On our end, that means that we automatically delete the content of your Snaps (the photo and video messages that you send your friends) from our servers after we detect that a Snap has been opened by all recipients or has expired.

And although the policy further acknowledges that Snap Inc. may share users' personal information "to comply with any valid legal process, governmental request, or applicable law, rule, or regulation" (and transparency reports show that the company has indeed complied with such requests in the past), the company can't grant the FBI (or any other agency) access to a "facial recognition database" that doesn't exist.

Some rumors die hard, however. An updated variant that cropped up in early 2017 brought two new claims to the mix: first, that the FBI literally created Snapchat's image filtering software (and the alleged facial recognition database); and second, that there is a smoking gun to prove it, namely U.S. patent #9396354.

The patent does exist (it was granted to Snapchat in July 2016), and it does describe an innovative use of facial recognition technology, but with respect to whether or not Snapchat's image and video filters are a covert means of building a facial recognition database, it's a red herring.

According to an analysis by Sophos' Naked Security blogger Alison Booth, the patent proposes using facial recognition software to identify individual subjects in photos, whereupon the photos would be modified, or their distribution restricted, in accordance with the subjects' pre-established privacy settings.

There is a catch. Implementation of the process would, of course, require amassing a facial recognition database. "For facial recognition to work," writes Booth, "Snapchat would need to store images of all users that sign up to the feature — as a reference image to compare photos against."

So there it is: a "facial recognition database" of the sort conspiracy theorists have been going on about since 2015. However, Snapchat has not, to date, implemented such a feature (a fact we confirmed with the company); there is no evidence that the FBI (or any other law enforcement agency) was involved in creating it; and the patent itself does not mention sharing facial recognition data with government entities.

Despite finding no legitimate basis for the claim that Snapchat is currently engaged in collecting, storing, or sharing facial recognition data on its users, we do not wish to downplay the increasing prevalence of facial recognition technology in both commercial and government applications, nor the privacy issues this raises.

Sen. Al Franken (D-Minnesota) articulated some of these issues in a statement announcing the release of a 2015 Government Accountability Office (GAO) report on the privacy implications of the technology:

The newly released report raises serious concerns about how companies are collecting, using, and storing our most sensitive personal information. I believe that all Americans have a fundamental right to privacy, which is why it's important that, at the very least, the tech industry adopts strong, industry-wide standards for facial recognition technology. But what we really need are federal standards that address facial recognition privacy by enhancing our consumer privacy framework.

The tech industry has yet to address these concerns to the satisfaction of consumer privacy watchdogs, however, nor has Congress made progress toward establishing the federal standards Franken called for. Thus far, the issue has been dealt with primarily in the court system, via cases such as the aforementioned BIPA class action lawsuits against Facebook and Google.

One of the ironies of the false alarms about Snapchat's alleged sharing of facial recognition data with the FBI is that the agency already maintains a biometric data network comprising the facial images of more than 117 million Americans (about half the U.S. adult population, and growing), mostly drawn from state DMV databases and other non-criminal sources. A 2016 report by the Georgetown Law Center on Privacy & Technology warned that the technology is both error-prone, with a disproportionate impact on communities of color, and almost totally unregulated.

In testimony before a Senate Judiciary subcommittee hearing chaired by Sen. Franken in 2012, Electronic Frontier Foundation attorney Jennifer Lynch urged Congress to act sooner rather than later to protect the biometric privacy of all Americans:

Face recognition and its accompanying privacy concerns are not going away. Given this, it is imperative that government act now to limit unnecessary biometrics collection; instill proper protections on data collection, transfer, and search; ensure accountability; mandate independent oversight; require appropriate legal process before government collection; and define clear rules for data sharing at all levels. This is important to preserve the democratic and constitutional values that are bedrock to American society.